In March 2026, Claude, GPT, and Gemini are all improving quickly, but each family is optimising for slightly different strengths. Anthropic is pushing Claude toward better coding and agentic work, OpenAI is shipping GPT-5.4 and smaller variants for high-volume use, and Google is refining Gemini 3.1 Pro and related product updates for reasoning-heavy workflows and broad ecosystem integration.

What each family is saying about itself in 2026

Anthropic’s Claude Opus 4.5 is framed as the best model for coding, agents, and computer use. OpenAI’s GPT-5.4 is presented as a smarter, faster, more useful model with built-in thinking and new mini/nano variants for efficient workloads. Google’s Gemini 3.1 Pro is focused on complex problem solving and improved reasoning, with broader product updates across the Gemini app and ecosystem.

The practical benchmark differences

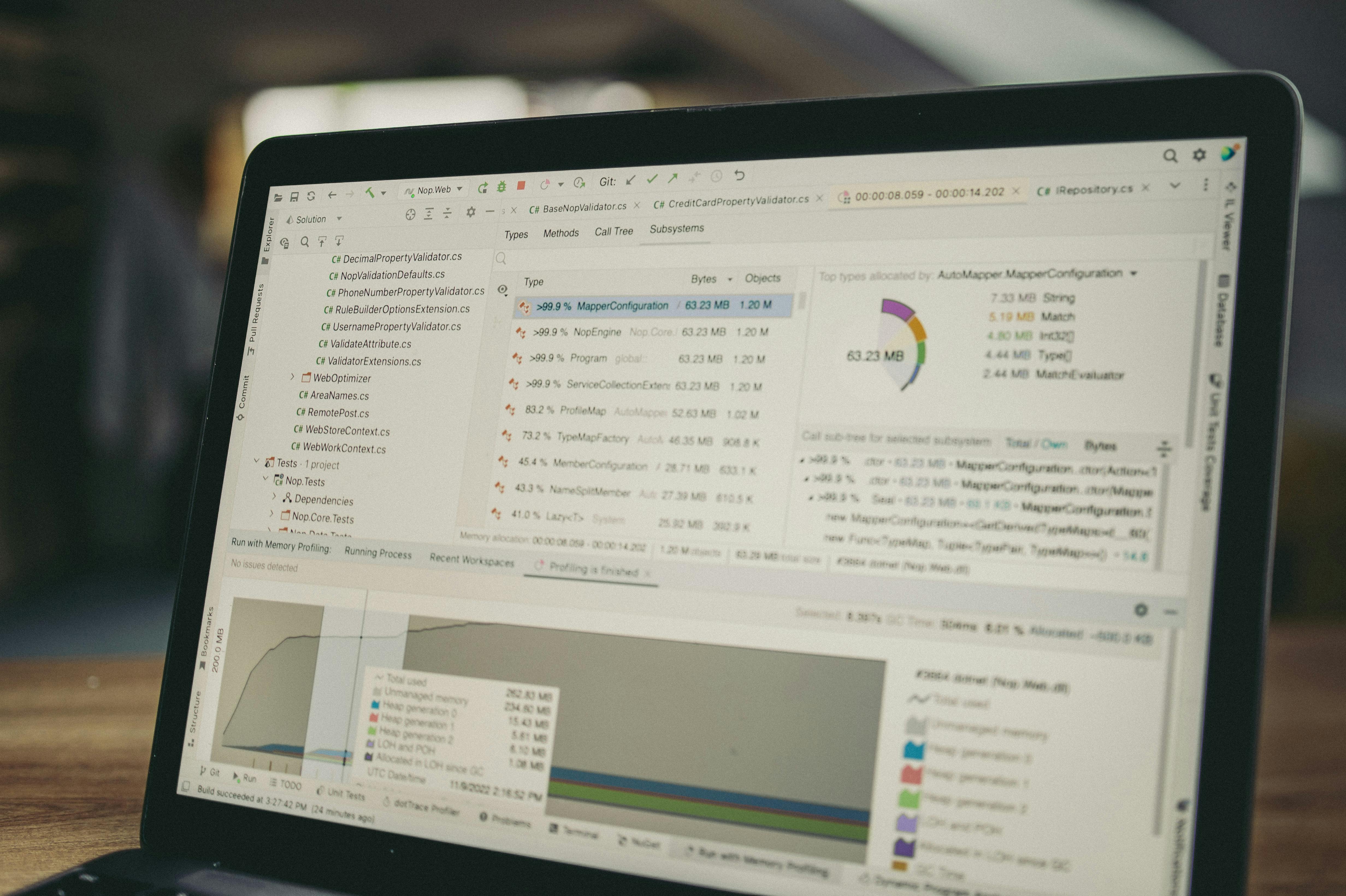

For product teams, the most useful benchmark categories are coding quality, agent reliability, long-context consistency, multimodal understanding, and cost-per-task. Claude often feels strongest for careful coding and longer reasoning chains. GPT-5.4 is attractive for general product workflows and subagent-style use. Gemini 3.1 Pro is increasingly relevant for complex reasoning and Google-centric workflows, especially where search, documents, or workspace integration matter.
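Cost-per-task is the easiest of these categories to get wrong, because a cheaper call that fails more often can cost more per finished task. Here is a minimal sketch of the arithmetic, assuming per-token pricing and a measured success rate. The `Pricing` class, the function names, and the prices are hypothetical placeholders, not real vendor rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Placeholder prices in USD per million tokens (not real vendor rates)."""
    input_per_mtok: float
    output_per_mtok: float


def cost_per_call(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single model call."""
    total = input_tokens * pricing.input_per_mtok + output_tokens * pricing.output_per_mtok
    return total / 1_000_000


def cost_per_successful_task(
    pricing: Pricing, input_tokens: int, output_tokens: int, success_rate: float
) -> float:
    """Expected cost in USD per usable result, assuming failed calls are retried."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_call(pricing, input_tokens, output_tokens) / success_rate


# Hypothetical example: a cheaper model that succeeds less often.
cheap = Pricing(input_per_mtok=1.0, output_per_mtok=4.0)
premium = Pricing(input_per_mtok=3.0, output_per_mtok=12.0)
print(cost_per_successful_task(cheap, 2_000, 800, success_rate=0.6))
print(cost_per_successful_task(premium, 2_000, 800, success_rate=0.95))
```

Plug in your own token counts and the success rate you observe on real tasks. The ranking can flip once retries are counted.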

How teams should choose in practice

The best team setup in 2026 is often multi-model. Use Claude when you want strong code review, careful reasoning, or agent workflows. Use GPT when you want a broad default model with strong performance and smaller variants for scale. Use Gemini when your workflow benefits from Google’s ecosystem or deep reasoning on structured tasks. The model that wins on a leaderboard may not be the model that wins your actual production workflow.
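A multi-model setup can start as a plain lookup from task type to model, with one default. The sketch below assumes each model is wrapped in a function that takes a prompt and returns text. `ModelRouter`, `make_stub`, and the task-type names are all hypothetical, and the stubs stand in for real API calls.

```python
from typing import Callable, Dict

ModelFn = Callable[[str], str]


class ModelRouter:
    """Route each task type to a named model, with a fallback default."""

    def __init__(self, clients: Dict[str, ModelFn], routes: Dict[str, str], default: str) -> None:
        # Fail early if a route or the default points at an unregistered model.
        missing = (set(routes.values()) | {default}) - set(clients)
        if missing:
            raise ValueError(f"no client registered for: {sorted(missing)}")
        self._clients = clients
        self._routes = routes
        self._default = default

    def pick(self, task_type: str) -> str:
        """Return the model name for a task type, or the default if unmapped."""
        return self._routes.get(task_type, self._default)

    def run(self, task_type: str, prompt: str) -> str:
        """Send the prompt to whichever model the task type maps to."""
        return self._clients[self.pick(task_type)](prompt)


def make_stub(name: str) -> ModelFn:
    """Stand-in for a real API call; it just tags the prompt with the model name."""
    return lambda prompt: f"[{name}] {prompt}"


router = ModelRouter(
    clients={name: make_stub(name) for name in ("claude", "gpt", "gemini")},
    routes={
        "code_review": "claude",
        "agent_workflow": "claude",
        "workspace_docs": "gemini",
    },
    default="gpt",
)

print(router.run("code_review", "Review this diff"))  # prints "[claude] Review this diff"
print(router.run("onboarding_copy", "Draft a welcome email"))  # unmapped, so the default handles it
```

The routing table here mirrors the suggestions above, but it should be driven by your own benchmark results rather than by this example.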

Benchmarking for real SaaS use

If your business serves clients in the UK, UAE, Saudi Arabia, Pakistan, the US, or Australia, benchmark all three models on the tasks your team actually performs: product specs, customer support, code review, onboarding copy, analytics summaries, and multilingual communication. Measure latency, consistency, and human edit time, not just raw output quality.
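Those three measurements can be collected with a small harness. This is a rough sketch using only the Python standard library, and it rests on a few assumptions: the model is any function that takes a prompt and returns text, consistency is approximated by text similarity across repeated runs, and `edit_fraction` is only a proxy for human edit time (real edit time still has to be logged by the people doing the editing). All function names are hypothetical.

```python
import statistics
import time
from difflib import SequenceMatcher
from itertools import combinations
from typing import Callable, Dict, List


def consistency(outputs: List[str]) -> float:
    """Mean pairwise similarity in [0, 1]; 1.0 means every run was identical."""
    pairs = list(combinations(outputs, 2))
    if not pairs:
        return 1.0
    return statistics.mean(SequenceMatcher(None, a, b).ratio() for a, b in pairs)


def edit_fraction(draft: str, final: str) -> float:
    """Share of the draft that changed before shipping (0.0 means shipped as-is)."""
    return 1.0 - SequenceMatcher(None, draft, final).ratio()


def benchmark(model: Callable[[str], str], prompt: str, runs: int = 3) -> Dict[str, float]:
    """Call the model several times on one prompt and summarise the runs."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    latencies: List[float] = []
    outputs: List[str] = []
    for _ in range(runs):
        start = time.perf_counter()
        outputs.append(model(prompt))
        latencies.append(time.perf_counter() - start)
    return {
        "median_latency_s": statistics.median(latencies),
        "consistency": consistency(outputs),
    }


def fake_model(prompt: str) -> str:
    """Deterministic stand-in for a real model call."""
    return f"Summary: {prompt}"


print(benchmark(fake_model, "Weekly analytics for the support team"))
print(edit_fraction("Summary: draft text", "Summary: final text"))
```

Run the same prompts through each model, then compare the numbers per workflow rather than in aggregate.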

MoodBook Studio recommendation

We recommend benchmarking by workflow, not brand loyalty. In 2026, the best model stack is the one that reduces editing time and improves shipping speed. Claude, GPT, and Gemini all have strong cases, but the winner is the one that performs best on your real tasks.

Frequently asked questions

- Which model is strongest for coding in 2026?

  Anthropic positions Claude Opus 4.5 as its strongest model for coding and agent work. GPT-5.4 and Gemini 3.1 Pro are also competitive, depending on the task.

- Should product teams use one AI model or several?

  Several is usually better. Different models win on different workflows, so multi-model routing is often the most practical approach.

- What should we benchmark first?

  Start with your most common production tasks: code refactors, support answers, product writing, and structured analysis. Those reveal the most useful differences.